The performance of Obsidian is something I care about deeply, since I have a fairly large Vault. So perhaps I can give a real-world example.

As of yesterday, my Vault had 8,641 .md files and 922,334 words. My computer is fairly old (5+ years): Windows 7 64-bit, an SSD, 8 GB RAM, and an AMD A8-5600K at 3.6 GHz.

I use Obsidian every day, weekends included, and throughout the day. For me, Obsidian's performance is good. Compared with other apps like Zettlr, it is great.

(I actually left Zettlr because it could not handle my Vault when it had only about half as many files as it does now.)

Searching is fairly quick for me (under 3 seconds). Files open instantly, both when navigating and through the quick switcher (Ctrl + O). Typing a file name is fast too, so there is no issue with the auto-complete window or auto-search.

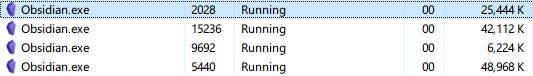

Creating new files is also quick. I don't experience any typing lag (at least not compared to other programs). Obsidian's memory usage is also fairly low (for an Electron app, of course). And Obsidian has never crashed on me or done anything strange to my files.

The only performance issue I have found is that creating a new file while the file explorer pane is open is slow, because Obsidian redraws the file explorer whenever a new file is made. So now I simply create new files with the search pane open, which is my usual workflow anyway.

All in all, Obsidian's performance is great and has stayed that way even as my Vault grows every day. The Obsidian developers clearly know what they are doing: in recent months we even had updates that improved performance (the opposite of what usually happens when more features are added).

(By the way, when I open my Vault in Visual Studio Code, opening files and searching are noticeably slower. So Obsidian beats the well-funded Visual Studio Code team in terms of performance!)

So from my perspective, there's nothing to worry about regarding Obsidian's performance. (But of course I am saving up for a new computer!)