What I was trying to do

I built a formal ontology system in _ontologies/ with relationship definitions (regulates, part_of, provides_service, etc.), type hierarchies, and domain-specific concepts. The idea was that AI agents (I’m using GitHub Copilot) could leverage these formal structures to answer complex queries about my vault.

Turns out… they can’t. At least not without a lot of extra work.

My setup

- 315 meeting notes with good YAML metadata (type, topic, date, org, contacts)

- 1111 people notes, again, good frontmatter

- 37 organization notes, good frontmatter

- Ontology definitions in _ontologies/core/ and domain folders

- Templates via Templater

- Dataview for queries

Everything has consistent metadata and wiki-links. The ontology defines relationships like [regulates::[[target]]] using inline field syntax, but I only use those here and there where appropriate to the text I’m writing… not consistently.

The test

I asked Copilot: “Find XXX from YYY we met with last year to show them the ZZZ, even though I’m sure I didn’t create a formal record for them”

What it actually used to find them:

- semantic_search (found relevant context)

- grep_search (pattern matching org names)

- Python script I’d written earlier

- YAML metadata

- Wiki-links

What it ignored completely: The entire freaking ontology system.

Copilot’s suggestion

“Just add inline fields to your entity notes! Put [works_at::[[Org Name]]] in people files and [does_that_thing_to::[[Entity]]] in org files. Then I can query these relationships via Dataview.”

Here’s what that would look like:

---

type: "[[Organization]]"

org_type: Consulting Firm

---

[specializes_in::[[Technology Strategy]], [[Data Architecture]]]

[part_of::[[Parent Company Group]]]

[serves::[[Government Agencies]], [[Private Sector]]]

[has_location::London, New York]

[partners_with::[[Tech Vendor A]], [[Research Institute B]]]

[related_to::[[Competitor X]], [[Industry Association Y]]]

## Description

A mid-sized consulting firm focusing on digital transformation

and data strategy for public and private sector clients.

## Related People

[Dataview query showing everyone who works here]
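To make the suggestion concrete, here is a minimal Python sketch of how bracketed inline fields like the ones above could be pulled out of note text and turned into (subject, relation, target) triples, plus a reverse index that approximates the "everyone who works here" lookup. This is not how Dataview or Copilot work internally. The function names `extract_triples` and `reverse_index` are hypothetical, and the regex only covers the `[key::value]` bracket form shown in this post.

```python
import re
from collections import defaultdict

# Matches bracketed inline fields such as [part_of::[[Parent Company Group]]].
# The lookbehind skips wiki-links whose target happens to contain "::".
INLINE_FIELD = re.compile(r"(?<!\[)\[(\w+)::((?:\[\[[^\]]*\]\]|[^\[\]])*)\]")
# Captures the link target, ignoring any "|alias" or "#heading" suffix.
WIKI_LINK = re.compile(r"\[\[([^\]|#]+)")


def extract_triples(note_name, text):
    """Return (subject, relation, target) triples found in one note's text."""
    triples = []
    for relation, raw_value in INLINE_FIELD.findall(text):
        targets = WIKI_LINK.findall(raw_value)
        if not targets:
            # Plain-text values (e.g. locations): split on commas.
            targets = [part for part in raw_value.split(",") if part.strip()]
        triples.extend((note_name, relation, t.strip()) for t in targets)
    return triples


def reverse_index(triples):
    """Map (relation, target) to the list of notes that point at it."""
    index = defaultdict(list)
    for subject, relation, target in triples:
        index[(relation, target)].append(subject)
    return index
```

With something like this, `reverse_index(...)[("works_at", "Org Name")]` would list every person note carrying `[works_at::[[Org Name]]]`. It only finds relationships that were written down as fields in the first place, which is exactly the maintenance problem described next.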

Sounds good except:

- I’d need to add these to 1100+ people and 37 orgs and maintain them (I guess easy to do w/ AI but token-expensive and super ugly)

- I don’t know what queries I’ll want in the future, so I feel like I’d constantly be adding to my ontology for every new question I have. It would be different if I had a repository of 1000s of questions I might be having…

- Temporal context gets lost (20 meetings with someone over 2 years becomes just [met_with::[[Person]]] 20 times)
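On the temporal point, one alternative is to derive the "met with" relationship from the meeting notes' existing frontmatter instead of hand-maintaining inline fields. The sketch below assumes the frontmatter has already been parsed into dicts (for example by a YAML library), that `date` is an ISO string like `2024-03-01`, and that `contacts` is a list of plain names. Those shapes are guesses about the vault, and `meeting_timeline` and `met_between` are hypothetical names.

```python
from collections import defaultdict
from datetime import date


def meeting_timeline(meetings):
    """Map each contact to the chronologically sorted meetings they attended.

    `meetings` is an iterable of dicts holding already-parsed frontmatter.
    The keys "date", "topic" and "contacts" follow the fields listed in the
    post; their exact shape (ISO date string, list of names) is assumed.
    """
    timeline = defaultdict(list)
    for meta in meetings:
        when = date.fromisoformat(meta["date"])
        for person in meta.get("contacts", []):
            timeline[person].append((when, meta.get("topic", "")))
    return {person: sorted(entries) for person, entries in timeline.items()}


def met_between(timeline, person, start, end):
    """Return the (date, topic) pairs for one person within [start, end]."""
    return [(d, t) for d, t in timeline.get(person, []) if start <= d <= end]
```

Here each meeting stays a dated entry rather than collapsing into a repeated `met_with` field, so a question like "who did we meet last year" becomes a date-range filter over metadata the notes already carry.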

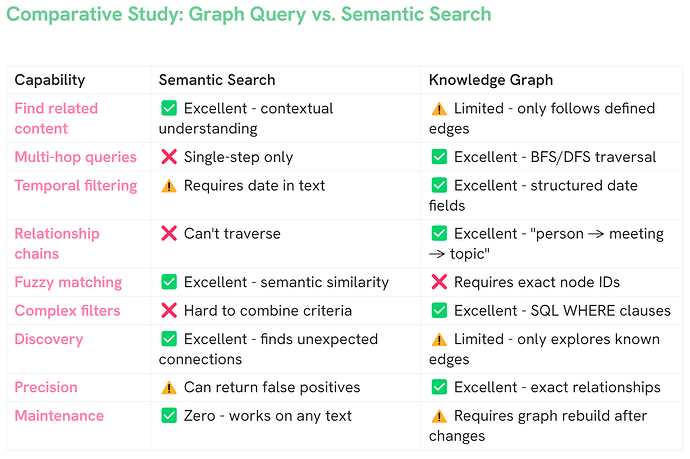

After I questioned it about this, it had a revised take: “Actually your current setup probably works fine. semantic_search handles relationship discovery without needing formal structure. Your ontology helps you organize thinking but doesn’t help me answer questions.”

In other words, “your ontology doesn’t help me at all,” which feels anticlimactic after building the whole thing… and just feels… wrong?

My question

Am I missing something? Has anyone gotten AI assistants to actually use formal ontology structures successfully? Did inline fields help?

I built this ontology thinking it would make the vault more queryable, but maybe it’s just documentation for me and doesn’t help the AI at all. Curious if others have tried this and what they learned.