Things I have tried

I have searched all over the Internet, but couldn’t find a solution that fits my idea.

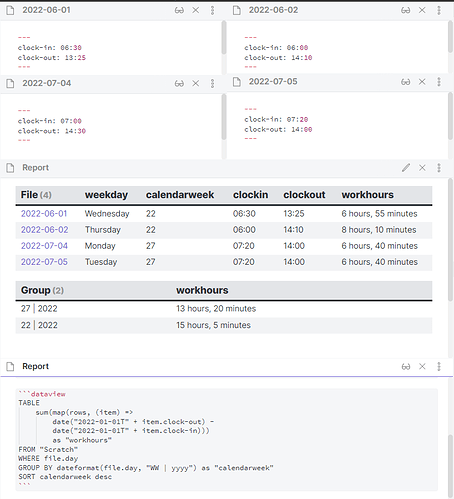

What I have so far is the following:

table

dateformat(file.day, "cccc") as "weekday",

dateformat(file.day, "WW") as "calendarweek",

clock-in as "clockin",

clock-out as "clockout",

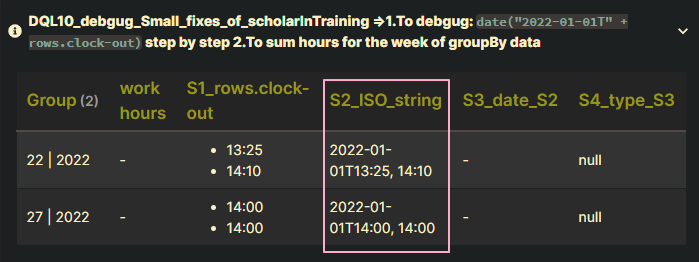

date("2022-01-01T" + clock-out) - date("2022-01-01T" + clock-in) as "workhours"

from "Calendar/days"

sort file.name desc

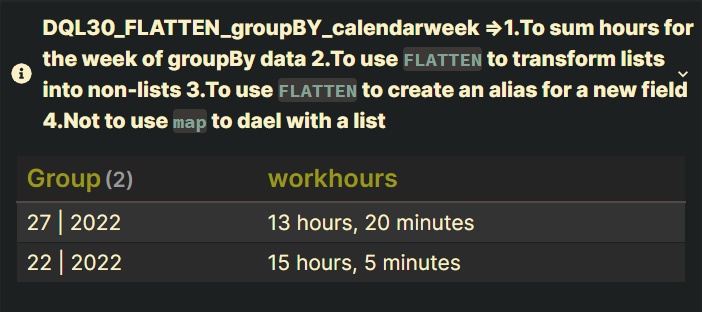

table

date("2022-01-01T" + clock-out) - date("2022-01-01T" + clock-in) as "work hours"

from "Calendar/days"

group by dateformat(file.day, "WW | yyyy") as "calendarweek"

sort desc

What I’m trying to do

Every day when I arrive at work, I write down the time in the frontmatter of my daily note as “clock-in”, and when I leave I note the time as “clock-out”.

My company expects me to work exactly 38.5 hours a week. From Monday to Thursday I have a break of exactly 45 minutes.

Usually I have to arrive at work at 07:00 and leave at 16:00, except on Fridays, when I leave earlier, at 12:30.
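To make the target numbers concrete, here is a rough sketch in plain JavaScript of the schedule as I understand it. The function and constant names are made up for illustration, and it assumes there is no break on Fridays (I only have one from Monday to Thursday). It checks that the daily targets add up to the 38.5 hours per week.

```javascript
// Hypothetical helper names; the schedule values come from my description above.
const BREAK_MINUTES = 45; // assumption: break applies Monday to Thursday only

// Convert an "HH:mm" string to minutes since midnight.
function toMinutes(hhmm) {
  const [h, m] = hhmm.split(":").map(Number);
  return h * 60 + m;
}

// Expected net working minutes for a weekday (1 = Monday ... 7 = Sunday).
function expectedMinutes(weekday) {
  if (weekday >= 1 && weekday <= 4) {
    // 07:00 to 16:00 minus the 45 minute break
    return toMinutes("16:00") - toMinutes("07:00") - BREAK_MINUTES;
  }
  if (weekday === 5) {
    // 07:00 to 12:30, assumed to have no break
    return toMinutes("12:30") - toMinutes("07:00");
  }
  return 0; // weekend
}

// Sum the targets for Monday through Friday.
const weeklyMinutes = [1, 2, 3, 4, 5].reduce(
  (sum, day) => sum + expectedMinutes(day),
  0
);

console.log(expectedMinutes(1) / 60); // 8.25
console.log(expectedMinutes(5) / 60); // 5.5
console.log(weeklyMinutes / 60);      // 38.5
```

So the target is 8.25 hours on each of the four long days and 5.5 hours on Friday, which matches the 38.5 hours my company expects.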

However, I sometimes work overtime, and I want to know how many overtime hours I have worked per day/week/month/year, so I know when to ask for time off in compensation.
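The calculation I have in mind could look roughly like the sketch below, again in plain JavaScript so it could perhaps be adapted to a DataviewJS block. Everything here is an assumption for illustration: the object shape, the function names, and the sample entries are made up, and it assumes the break is included in the span between “clock-in” and “clock-out” and has to be subtracted.

```javascript
// Hypothetical sketch: names and the sample entries are illustrative, not real data.
const toMinutes = (hhmm) => {
  const [h, m] = hhmm.split(":").map(Number);
  return h * 60 + m;
};

// Expected net minutes and break minutes per weekday (1 = Monday ... 7 = Sunday).
const EXPECTED = { 1: 495, 2: 495, 3: 495, 4: 495, 5: 330, 6: 0, 7: 0 };
const BREAK = { 1: 45, 2: 45, 3: 45, 4: 45, 5: 0, 6: 0, 7: 0 };

// Overtime in minutes for one day; a negative value means I left early.
function overtimeMinutes({ weekday, clockIn, clockOut }) {
  const worked = toMinutes(clockOut) - toMinutes(clockIn) - BREAK[weekday];
  return worked - EXPECTED[weekday];
}

// Sum overtime over any list of days (a week, a month, or a year).
function totalOvertime(days) {
  return days.reduce((sum, day) => sum + overtimeMinutes(day), 0);
}

// Made-up sample entries standing in for daily notes.
const week = [
  { weekday: 1, clockIn: "07:00", clockOut: "16:30" }, // 30 minutes over
  { weekday: 2, clockIn: "07:00", clockOut: "16:00" }, // exactly on target
  { weekday: 5, clockIn: "06:45", clockOut: "12:30" }, // 15 minutes over
];

console.log(totalOvertime(week)); // 45
```

Grouping the same list by week, month, or year before summing would give the totals for each period.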

I have a separate note where I want to keep track of all this information.

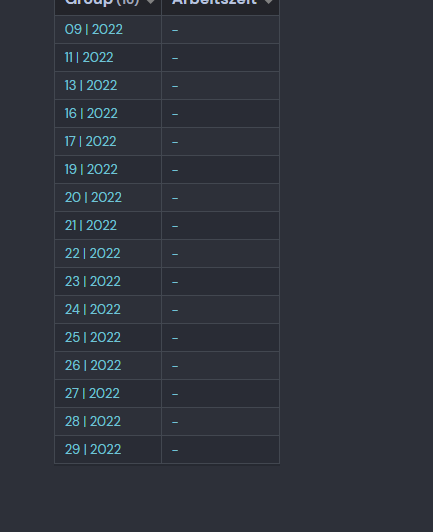

This is how it looks:

I haven’t figured out how to achieve this work-hour tracking, so I am asking you.

Additional Information:

I also want to track the days when I am on vacation and/or sick.

So what do you think would be the best way to achieve this?